Researchers from ETH Zurich explore simplifications in the design of deep Transformers, aiming to make them more robust and efficient. They propose modifications by combining signal propagation theory with empirical observations, enabling the removal of various components from standard transformer blocks without compromising training speed or performance.

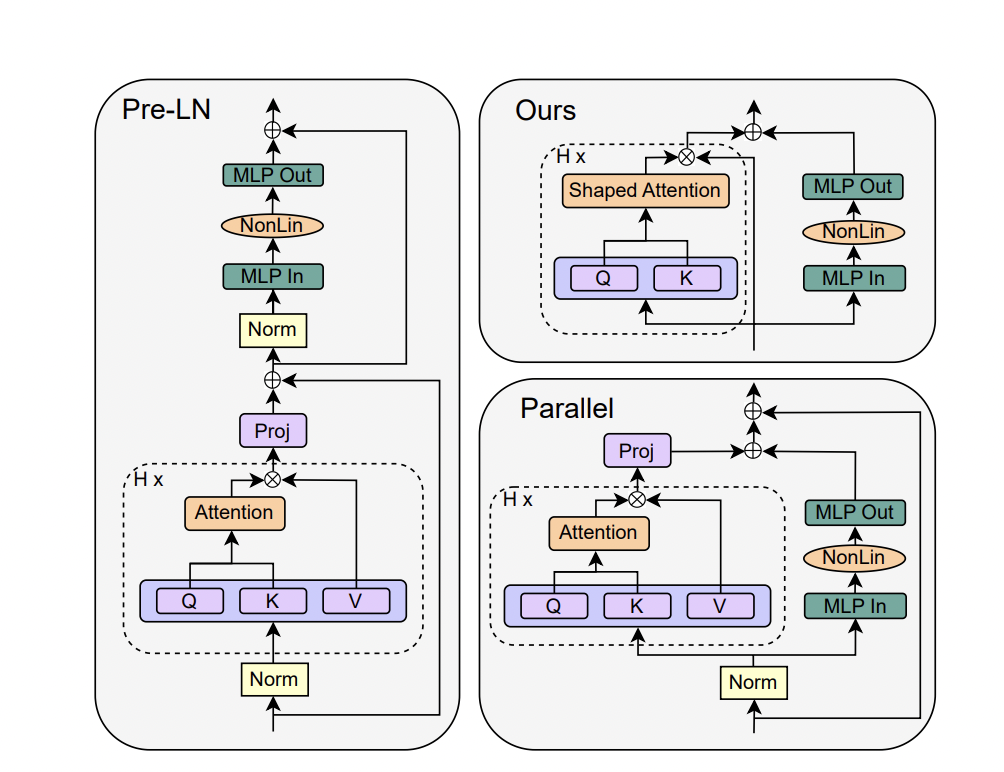

The research presents a study on simplifying transformer blocks in deep neural networks, focusing specifically on the standard transformer block. Drawing inspiration from signal propagation theory, it examines the usual arrangement of identical building blocks, each made of attention and MLP sub-blocks with skip connections and normalization layers. It also considers the parallel block, which computes the MLP and attention sub-blocks in parallel for improved efficiency.
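To make the difference between the two layouts concrete, here is a minimal pure-Python sketch of a sequential (Pre-LN style) block next to a parallel block. The sub-blocks `toy_attention` and `toy_mlp` are hypothetical stand-ins with no learned weights, and the RMS-style normalization is an assumption chosen for brevity; none of this is the paper's implementation.

```python
import math
from typing import List

Seq = List[List[float]]  # a sequence of token vectors

def rms_norm(x: Seq) -> Seq:
    """Scale each token vector to unit root-mean-square (no learned gain)."""
    out = []
    for tok in x:
        rms = math.sqrt(sum(v * v for v in tok) / len(tok)) or 1.0
        out.append([v / rms for v in tok])
    return out

def toy_attention(x: Seq) -> Seq:
    """Stand-in for attention: each token averages itself and earlier tokens."""
    out = []
    for i in range(len(x)):
        ctx = x[: i + 1]
        out.append([sum(col) / len(ctx) for col in zip(*ctx)])
    return out

def toy_mlp(x: Seq) -> Seq:
    """Stand-in for the MLP: a per-token ReLU."""
    return [[max(0.0, v) for v in tok] for tok in x]

def add(a: Seq, b: Seq) -> Seq:
    return [[p + q for p, q in zip(ta, tb)] for ta, tb in zip(a, b)]

def sequential_block(x: Seq) -> Seq:
    # The MLP sub-block consumes the output of the attention sub-block.
    h = add(x, toy_attention(rms_norm(x)))
    return add(h, toy_mlp(rms_norm(h)))

def parallel_block(x: Seq) -> Seq:
    # Both sub-blocks read the same normalized input, so they can run side by side.
    n = rms_norm(x)
    return add(x, add(toy_attention(n), toy_mlp(n)))

x = [[1.0, -1.0], [3.0, 4.0]]
print(sequential_block(x))
print(parallel_block(x))
```

In the parallel form the two sub-block outputs are independent of each other and share one normalization, which is what allows them to be computed at the same time.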

The study asks whether each component of the standard transformer block is actually necessary, and explores which ones can be removed without slowing down training. The motivation for simplification comes from the complexity of modern neural network architectures and the gap between theory and practice in deep learning.

The approach combines signal propagation theory and empirical observations to propose modifications that simplify transformer blocks. The study ran experiments on autoregressive decoder-only and BERT encoder-only models to assess the performance of the simplified transformers. It also runs additional experiments and ablations to study the impact of removing skip connections in the attention sub-block and the resulting signal degeneracy.
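The intuition behind signal degeneracy can be illustrated with a deliberately extreme toy case. The sketch below assumes scalar tokens and a uniform (non-causal) attention pattern that replaces every token with the sequence mean; real attention is input-dependent, so this is only an illustration of the failure mode, not the paper's experiment. The helper names are hypothetical.

```python
from typing import List

def uniform_attention(tokens: List[float]) -> List[float]:
    """Extreme toy attention: every token attends equally to all tokens."""
    mean = sum(tokens) / len(tokens)
    return [mean for _ in tokens]

def spread(tokens: List[float]) -> float:
    """How distinguishable the tokens still are (max minus min)."""
    return max(tokens) - min(tokens)

def run_layers(tokens: List[float], depth: int, skip: bool) -> List[float]:
    for _ in range(depth):
        mixed = uniform_attention(tokens)
        if skip:
            # Skip connection: the original token is added back to the mixed signal.
            tokens = [t + m for t, m in zip(tokens, mixed)]
        else:
            # No skip connection: only the mixed signal is passed on.
            tokens = mixed
    return tokens

start = [1.0, 2.0, 3.0]
print("no skip:", spread(run_layers(start, depth=3, skip=False)))
print("skip:   ", spread(run_layers(start, depth=3, skip=True)))
```

Without the skip connection every token becomes identical after the first layer, so later layers cannot tell positions apart. With the skip connection the absolute differences between tokens survive, which is why removing it requires compensating changes elsewhere.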

The research proposes modifications that simplify transformer blocks by removing skip connections, projection and value parameters, sequential sub-blocks, and normalization layers. The simplified blocks match the per-update training speed and performance of standard transformers while achieving higher training throughput and using fewer parameters. The study also investigated the impact of different initialization methods on the performance of simplified transformers.

The proposed simplified transformers achieve performance comparable to standard transformers while using 15% fewer parameters and delivering a 15% increase in training throughput. The study presents simplified deep-learning architectures that can reduce the cost of large transformer models. The experimental results support the effectiveness of the simplifications across various settings and emphasize the importance of proper initialization for optimal results.
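A rough back-of-the-envelope count shows where a parameter saving of this size can come from. The sketch below assumes each attention sub-block holds four square weight matrices (query, key, value, output projection) and each MLP uses a 4x hidden expansion; biases, normalization gains, and embeddings are ignored. The width of 768 and the function name `block_params` are illustrative assumptions, not values taken from the paper.

```python
def block_params(d_model: int, mlp_ratio: int = 4,
                 use_value: bool = True, use_proj: bool = True) -> int:
    """Approximate weight count of one transformer block (weights only)."""
    attn = 2 * d_model * d_model          # query and key matrices
    if use_value:
        attn += d_model * d_model         # value matrix
    if use_proj:
        attn += d_model * d_model         # output projection matrix
    mlp = 2 * mlp_ratio * d_model * d_model  # up- and down-projection
    return attn + mlp

standard = block_params(768)
simplified = block_params(768, use_value=False, use_proj=False)
saving = 1 - simplified / standard
print(f"standard:   {standard:,}")
print(f"simplified: {simplified:,}")
print(f"per-block saving: {saving:.1%}")
```

Under these assumptions, dropping the value and projection matrices removes 2 of the 12 square matrices per block, or about one sixth of the block weights. Whole-model savings would be somewhat smaller because embedding parameters are untouched, which is broadly in line with the roughly 15% figure reported.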

The recommended future research is to investigate the effectiveness of the proposed simplifications on larger transformer models, since the study primarily focused on relatively small models compared to the largest transformers. It also suggests conducting a comprehensive hyperparameter search to improve the performance of the simplified blocks, since the study tuned only key hyperparameters and relied on default choices for the rest. Finally, it proposes exploring hardware-specific implementations of the simplified blocks, which could potentially yield further improvements in training speed and performance.

Check out the Paper. All credit for this research goes to the researchers of this project.

Hello, my name is Adnan Hassan. I'm a consulting intern at Marktechpost and soon to be a management trainee at American Express. I'm currently pursuing a dual degree at the Indian Institute of Technology, Kharagpur. I'm passionate about technology and want to create new products that make a difference.